Introduction

Your marketing team just launched an AI Agent to handle customer inquiries. Sales deployed another one for lead qualification. IT is testing agents for ticket routing, and finance has a bot reconciling invoices.

But here’s the catch: nobody coordinated these AI Agent deployments. Nobody checked if they overlap. And the real issue? Nobody knows exactly how many autonomous AI Agents are operating across your organization right now.

This is what’s known as AI Agent sprawl, and it’s fast becoming one of the biggest governance challenges facing enterprises today. Unlike traditional software, which waits for human commands, AI Agents operate autonomously. They make decisions, access data, trigger workflows, and interact with your systems without the constant need for human oversight.

When these autonomous systems proliferate without proper governance, the consequences extend far beyond inefficiency. You’re exposed to security vulnerabilities, compliance risks, unpredictable costs, and operational chaos, all of which can erode your competitive edge.

The irony? Most organizations implement AI Agents to boost efficiency and drive innovation. But without the right AI governance frameworks in place, what was meant to bring innovation can quickly turn into unmanageable complexity.

This isn’t about halting AI adoption. It’s about implementing it intelligently with full visibility, governance, and control from day one. Enterprises that master how to manage AI Agent sprawl will gain a competitive advantage over those still grappling with chaos.

In this article, we’ll learn what AI Agent sprawl truly means, why it happens so easily, and, most importantly, how to take control before these autonomous AI systems start controlling you.

What Is AI Agent Sprawl?

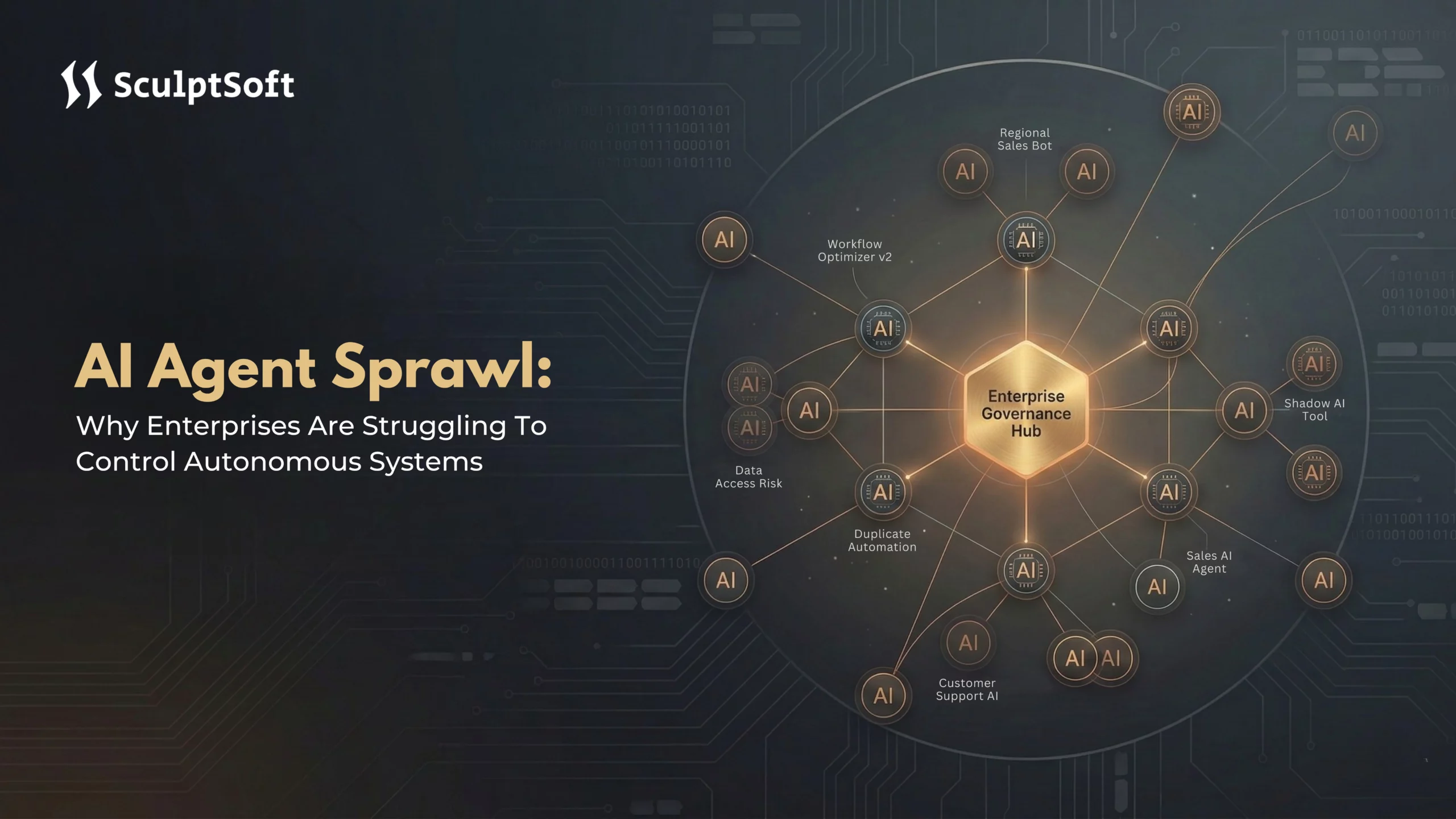

AI Agent sprawl occurs when autonomous AI systems spread across your organization without centralized governance, oversight, or visibility. Think of it as the AI version of shadow IT, but far more complex and potentially dangerous.

In simple terms, AI Agent sprawl happens when different departments, teams, or individuals deploy AI Agents on various platforms without a unified management strategy. This results in a fragmented ecosystem of autonomous tools that nobody fully controls, leading to inefficiency and risks.

The Key Difference: Autonomous vs. Passive

Traditional software is passive: it waits for human input. You open an application, enter data, and click buttons to make it work. Even automated scripts follow predetermined rules and only operate when instructed.

AI Agents, on the other hand, are autonomous systems that can:

- Make decisions based on context and changing conditions.

- Access data across multiple systems without human intervention.

- Chain actions together autonomously, without explicit instructions.

- Learn from interactions and adjust their behavior accordingly.

- Operate continuously, without requiring human supervision.

This autonomy is what makes AI Agents so powerful. But it’s also what makes them difficult to manage and control.

Why AI Agent Sprawl Is Different From Shadow IT

Shadow IT meant software purchased and used without IT approval. AI Agent sprawl is related, but its risks reach further:

- Shadow IT is passive: it sits idle until someone uses it. AI Agents are active: they run continuously, make decisions, and take actions on their own, without oversight.

- Shadow IT primarily affects procurement, leading to wasted money on duplicate software licenses. AI Agent sprawl affects operations: agents alter data, trigger workflows, and shape customer experience in real time.

- Shadow IT creates visibility gaps since you’re unaware of the software being used. AI Agent sprawl creates accountability gaps: you don’t know what decisions your agents are making or why.

How AI Agent Sprawl Looks in Practice

Let’s say your sales team builds an AI Agent to update customer records after every interaction. Meanwhile, marketing creates its own AI Agent that also updates customer profiles based on engagement data. Customer service deploys an agent that modifies the same records when handling support tickets.

Now you have three autonomous systems, each built on a different platform with different logic, all trying to update the same customer data. They conflict and duplicate effort, and nobody knows which agent made which change, or why.

That’s AI Agent sprawl in action, and it’s happening across industries right now.

How Does AI Agent Sprawl Happen in Organizations?

AI Agent sprawl occurs because AI Agents are easy to deploy, and organizational structures haven’t caught up with this new reality.

1. The Shadow AI Problem

AI Agent sprawl often begins when a department faces a repetitive task. Someone discovers an AI Agent builder, either through a vendor they already work with, a free trial, or as part of software they’re already using. Within hours, an agent is created to automate the task. It works, saves time, and is deployed without involving IT, security, or compliance teams.

This happens easily because no-code and low-code platforms have made it possible for non-technical users to create AI Agents. Managers and department heads can build and deploy agents without needing central IT approval, especially when department budgets cover these tools. Additionally, the pressure for quick results makes waiting for formal approval feel unnecessary, especially when competitors are already using AI solutions.

2. The Marketplace Explosion

Every major platform now offers AI Agents, including Microsoft Copilot Studio, Salesforce Agentforce, Google Vertex AI, AWS Bedrock Agents, and OpenAI GPT. Beyond enterprise platforms, hundreds of specialized AI Agent tools are available for specific use cases such as customer service, data analysis, content creation, and workflow automation.

This explosion of tools contributes to AI Agent sprawl as different teams choose platforms based on convenience or familiarity. Each platform has its own governance model, security protocols, and integration methods, making it challenging for AI Agents built on separate systems to work together seamlessly. Integration issues arise, leading to governance inconsistencies.

3. The "Build Fast, Govern Later" Mentality

There’s tremendous pressure on teams to deploy AI Agents quickly. Leadership expects rapid results, and competitors are moving fast. This “build fast, govern later” mentality causes teams to prioritize speed over governance.

As a result, teams deploy AI Agents to solve immediate problems but fail to ask key governance questions such as:

- How will we monitor this agent long-term?

- What happens if it makes a mistake?

- How does it integrate with our existing systems?

By the time these questions are raised, multiple AI Agents are already in production, creating risks that are difficult to mitigate.

4. When Convenience Trumps Governance

Creating an AI Agent is quick: one can be built and deployed within a day. Building a proper governance framework takes weeks or even months. This mismatch in timelines leads to AI Agent sprawl almost by default. Governance takes a backseat to the urgency of deploying agents to solve immediate business needs.

5. The Knowledge Fragmentation Problem

As different teams develop their own AI Agents, knowledge becomes fragmented. Sales, marketing, and IT each know about their respective agents, but no one has a complete view of what’s running across the organization.

When the creator of an agent leaves the organization, the agent becomes orphaned, still running, still accessing data, but without any oversight or understanding of how it functions. This fragmentation makes AI Agent sprawl progressively harder to manage over time, compounding the issue.

What Are The Risks of AI Agent Sprawl?

AI Agent sprawl isn’t just an operational inconvenience. It creates significant business risks that impact security, compliance, financial performance, and operational stability.

1. Security Vulnerabilities That Multiply

Each AI Agent represents a potential entry point for security threats. When agents proliferate without oversight, your attack surface grows with every new agent and every system it can reach.

- Manipulation risk: Prompt injection attacks can trick agents into revealing sensitive data or executing unauthorized actions. Traditional security tools are often unprepared for this growing threat.

- Overly permissive access: AI Agents may have broader access than needed. An agent that should only read customer data might inadvertently have write permissions across systems.

- Poor isolation: Without proper sandboxing, a compromised agent can cause cascading failures: leaking data, corrupting records, or triggering unintended workflows.

- Ripple effect: Unlike traditional software vulnerabilities that affect a single application, AI Agents interact with multiple systems autonomously, spreading security issues throughout the infrastructure.

2. Compliance Nightmares

AI Agent sprawl makes regulatory compliance almost impossible. Frameworks like GDPR, HIPAA, CCPA, and the EU AI Act require transparency and control over AI systems.

- Lack of visibility: If you don’t know which agents are running, you can’t explain what data they access or how they make decisions, and both are critical for compliance.

- Fragmented audit trails: Different platforms log events in varied ways, complicating audits or investigations and creating gaps in accountability.

- Data governance breakdown: Without central oversight, different agents may handle data inconsistently, failing to meet data residency or privacy requirements.

This challenge intensifies in regulated industries like healthcare (HIPAA) or finance, where non-compliance can have serious consequences.

3. The Hidden Financial Impact

AI Agent sprawl affects your budget in multiple ways:

- Duplicate capabilities: Multiple departments building agents for similar tasks result in wasted resources on redundant licenses, compute, and API calls.

- Idle agents: Agents that no longer serve a purpose can still consume compute cycles and incur cloud costs, which add up over time.

- Troubleshooting costs: When agents malfunction, developers spend excessive time diagnosing issues instead of focusing on innovation.

- Opportunity costs: Resources spent managing AI sprawl detract from strategic initiatives that could drive business growth.

4. Operational Chaos and Workflow Conflicts

Uncoordinated AI Agents cause disruptions in daily operations:

- Conflicting actions: Agents may update the same customer record with conflicting data or process the same support ticket differently, creating confusion.

- Unpredictable workflows: Without knowing which agents are doing what, troubleshooting becomes difficult, and system behavior becomes unpredictable.

- Knowledge silos: Agents operate independently, leading to inconsistent decisions and fragmented insights across teams.

- Trust erosion: Mistakes or conflicts caused by agents undermine trust in AI-driven decisions, impacting future adoption and acceptance.

Understanding these risks makes the stakes clear, but there’s another challenge: the tools and approaches you already have in place probably won’t solve the AI Agent sprawl problem. Here’s why.

Why Traditional IT Controls Aren't Enough for AI Agents

You already have robust security frameworks, access management systems, and monitoring tools. Your IT team has been managing technology for years. So, why aren’t these enough for managing AI Agents?

The truth is, traditional IT controls were designed for a different era – one where software waited for commands, and humans made all the decisions. AI Agents break these fundamental assumptions.

1. The Visibility Gap

Traditional IT monitoring tracks predictable activities, like:

- Software installations and updates

- User logins and access history

- API usage

- Database queries

- Network traffic

But AI Agents don’t operate that way. They make decisions based on constantly changing context, often chaining actions in ways that weren’t explicitly programmed. They generate new queries and requests that your monitoring systems might not anticipate.

Your existing tools may detect API calls, data access, or system changes, but they can’t understand the intent or context behind these actions. For example, an agent accessing customer data could either be fulfilling a legitimate service request or exfiltrating sensitive information. Traditional monitoring systems can’t distinguish between these possibilities without understanding the agent’s purpose and decision-making logic.

You can’t control what you can’t see. With AI Agents, full visibility requires understanding not just what happened, but why it happened and what might happen next.

2. Security Frameworks Built for Different Threats

Standard security controls focus on perimeter defense, user authentication, and preventing unauthorized access. But AI Agents are inherently inside your system, designed to have access to data and resources.

Here’s where the mismatch occurs:

- Traditional access control: Grants specific users access to specific resources based on defined conditions.

- AI Agent reality: Grants autonomous systems access to whatever resources are necessary to complete their assigned tasks, with decisions based on evolving context.

Your security framework can enforce rules, but AI Agents are meant to make intelligent decisions on their own, often in ways that are hard to predict in advance. This autonomy is the core value of AI Agents, but it doesn’t fit neatly into traditional IT security frameworks.

3. The Autonomy Paradox

The core tension with AI Agents is this: Their value comes from autonomy, the ability to make intelligent decisions and take actions without constant human oversight.

However, traditional IT governance is built on control and predictability. You want to know exactly what will happen when someone clicks a button or runs a process. These two goals are fundamentally at odds.

If you restrict agents with too many rules and constant approvals, they lose their autonomy. They become more like traditional automation, less efficient and more expensive. On the other hand, giving them too much freedom makes it impossible to predict, audit, or even explain their actions.

AI Agents are designed to exhibit intelligent, context-aware behavior, which traditional IT controls can’t fully manage or predict.

4. The Audit Trail Challenge

In traditional systems, audit logs are straightforward: User A accessed File B at Time C from Location D. But AI Agents create far more complex audit trails.

For example:

- Agent A triggers Action B, which causes Agent C to query System D and modify Record E, based on Context F influenced by External Data G.

Now, trace the decision logic that started this chain. Why did the agent make each choice? Was the outcome appropriate and compliant?

Traditional audit tools weren’t designed to handle this level of complexity. They track individual actions but fail to trace the full decision-making process across interconnected systems.
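One way to make such chains traceable is to have every agent action record the event that caused it, along with the reason the agent gave for acting. The following is a minimal sketch under that assumption; the `AuditEvent` fields and the `trace_back` helper are hypothetical names, not part of any specific audit product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    event_id: str
    parent_id: Optional[str]  # event that caused this one, None for the root
    agent: str
    action: str
    reason: str               # context the agent recorded for its decision


def trace_back(events, event_id):
    """Follow parent links from an event to its root cause (root first)."""
    chain = []
    seen = set()  # guards against accidental cycles in the log
    current_id = event_id
    while current_id is not None and current_id not in seen:
        seen.add(current_id)
        event = events.get(current_id)
        if event is None:
            break  # the parent was never logged: an audit gap
        chain.append(event)
        current_id = event.parent_id
    return chain[::-1]


events = {
    e.event_id: e
    for e in [
        AuditEvent("e1", None, "agent-a", "trigger action B", "new ticket"),
        AuditEvent("e2", "e1", "agent-c", "query system D", "needs history"),
        AuditEvent("e3", "e2", "agent-c", "modify record E", "stale address"),
    ]
}
chain = trace_back(events, "e3")
for event in chain:
    print(event.agent, "->", event.action, "because", event.reason)
```

The chain is only as complete as the logging: if any agent skips the `parent_id` link, the trace stops at that point, which is itself a useful signal during an audit.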

5. The Integration Complexity

Traditional IT systems integrate through predictable APIs with known behaviors. An API is called and data is returned. Simple.

But AI Agents integrate dynamically, adapting based on the situation. The same agent might call different APIs in varying sequences depending on the context. It may interpret responses differently based on what it’s trying to accomplish.

This flexibility creates emergent patterns that vary based on circumstances, which traditional integration monitoring and governance tools weren’t built to handle. As a result, these tools provide incomplete visibility.

These limitations don’t mean that traditional IT controls are useless. They are still necessary but not sufficient on their own for managing AI Agents. What you need are governance approaches specifically designed for autonomous systems. We’ll explore those next.

Effective AI Agent Governance: Tools, Policies, and Audit Process

Managing AI Agent sprawl requires a fundamentally different approach from traditional IT governance. You need tools and processes specifically designed for autonomous systems that make independent decisions.

1. Start with a Centralized AI Agent Registry

The foundation of AI governance is visibility. Before you can effectively manage AI Agents, you need to know they exist. A centralized AI Agent registry serves as your single source of truth, tracking every agent operating in your organization.

Your registry should track:

- Identity and ownership: Who created this agent? Which department owns it? Who’s responsible for its actions?

- Purpose and scope: What business problem does it solve? What systems and data does it access? What actions can it take?

- Technical configuration: Which AI model powers it? Which platforms and APIs does it connect to?

- Access permissions: What data can it read or modify? What workflows can it trigger?

- Activity status: Is it actively running? When was it last used? Is it delivering value?

- Version history: When was it deployed? What changes have been made? Who approved those changes?

Think of this registry as a Configuration Management Database for your AI Agents. Just as you wouldn’t run IT systems without knowing what servers and apps you have, you can’t govern AI Agents without knowing what they are.
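To make the registry fields above concrete, here is a minimal in-memory sketch. The `AgentRecord` and `AgentRegistry` names, their fields, and the sample values are all illustrative assumptions; a real registry would sit on a database or an existing CMDB.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class AgentRecord:
    """One registry entry. All field names are illustrative assumptions."""
    agent_id: str
    owner: str                 # person accountable for the agent
    department: str
    purpose: str
    model: str                 # AI model that powers the agent
    platforms: list[str] = field(default_factory=list)
    can_read: set[str] = field(default_factory=set)
    can_write: set[str] = field(default_factory=set)
    deployed_on: Optional[date] = None
    last_active: Optional[date] = None


class AgentRegistry:
    """In-memory stand-in for a central registry."""

    def __init__(self) -> None:
        self._records = {}

    def register(self, record: AgentRecord) -> None:
        # Refuse duplicates so each agent has exactly one entry.
        if record.agent_id in self._records:
            raise ValueError(f"agent already registered: {record.agent_id}")
        self._records[record.agent_id] = record

    def owned_by(self, department: str) -> list[str]:
        """Return the ids of all agents a department is responsible for."""
        return sorted(
            r.agent_id for r in self._records.values()
            if r.department == department
        )


registry = AgentRegistry()
registry.register(AgentRecord(
    agent_id="crm-updater", owner="j.doe", department="Sales",
    purpose="Update customer records after each interaction",
    model="example-model", platforms=["crm"],
    can_write={"crm.customers"}, deployed_on=date(2025, 1, 15),
))
print(registry.owned_by("Sales"))
```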

2. Implement Practical Governance Policies

Discovery is just the first step. You need enforceable governance policies that cover the entire lifecycle of an AI Agent, from creation to retirement.

- Pre-deployment approval process: Not all agents require the same level of scrutiny. Low-risk agents (e.g., those that only read data) may be fast-tracked, while high-risk agents (e.g., those handling sensitive data) require a thorough review.

- Evaluation criteria: Assess factors like business value, data access, decision-making authority, security controls, and monitoring needs.
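A tiered approval process can be expressed as a small rule function. The sketch below assumes three tiers and three yes/no inputs; the middle tier and the exact rules are assumptions made for illustration, and each organization would define its own.

```python
def review_tier(writes_data: bool, handles_sensitive_data: bool,
                acts_without_approval: bool) -> str:
    """Map a proposed agent's traits to a review path (hypothetical rules)."""
    # Sensitive data, or unsupervised writes, always get the thorough review.
    if handles_sensitive_data or (writes_data and acts_without_approval):
        return "full-review"
    # Agents that write, but only with human approval, get a lighter review.
    if writes_data:
        return "standard-review"
    # Read-only agents on non-sensitive data can be fast-tracked.
    return "fast-track"


print(review_tier(writes_data=False, handles_sensitive_data=False,
                  acts_without_approval=False))
```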

Once deployed, agents need clear operational guardrails:

- Data access limits based on the least-privilege principle

- Rate limiting on API calls and compute usage to prevent runaway costs

- Human-in-the-loop requirements for high-stakes decisions

- Automatic escalation when agents encounter edge cases
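As an example of one guardrail from the list above, rate limiting can be sketched as a sliding-window counter. The `RateLimiter` class below is a hypothetical illustration; the caller passes the current time explicitly so the behavior is easy to test.

```python
from collections import deque


class RateLimiter:
    """Allow at most max_calls within any window_seconds span."""

    def __init__(self, max_calls: int, window_seconds: float) -> None:
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self._timestamps = deque()

    def allow(self, now: float) -> bool:
        # Forget calls that have aged out of the window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        if len(self._timestamps) >= self.max_calls:
            return False  # over budget: block, queue, or escalate the call
        self._timestamps.append(now)
        return True


limiter = RateLimiter(max_calls=2, window_seconds=60)
results = [limiter.allow(t) for t in (0, 1, 2, 60)]
print(results)  # [True, True, False, True]
```

In production, `now` would typically come from `time.monotonic()`, and a rejected call would feed the escalation path rather than fail silently.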

Agents should also have a clear lifecycle management policy, including:

- Regular performance reviews

- Automatic flagging of idle agents

- Decommissioning processes for agents no longer delivering value

- Ownership transfer procedures when creators leave
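Flagging idle and orphaned agents can be automated once last-activity dates and owners are recorded. This is a rough sketch: the 90-day idle threshold, the dictionary keys, and the `flag_for_review` name are assumptions chosen for the example.

```python
from datetime import date, timedelta


def flag_for_review(agents, today, active_owners, max_idle_days=90):
    """Return {agent id: [reasons]} for agents that need a lifecycle review."""
    flags = {}
    for agent in agents:
        reasons = []
        if today - agent["last_active"] > timedelta(days=max_idle_days):
            reasons.append("idle")
        if agent["owner"] not in active_owners:
            reasons.append("orphaned")  # the creator has left or moved on
        if reasons:
            flags[agent["id"]] = reasons
    return flags


agents = [
    {"id": "invoice-bot", "owner": "a.kim", "last_active": date(2025, 1, 1)},
    {"id": "lead-scorer", "owner": "b.roy", "last_active": date(2025, 5, 20)},
    {"id": "faq-agent", "owner": "c.ng", "last_active": date(2025, 5, 30)},
]
flags = flag_for_review(agents, today=date(2025, 6, 1),
                        active_owners={"a.kim", "c.ng"})
print(flags)  # {'invoice-bot': ['idle'], 'lead-scorer': ['orphaned']}
```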

The goal is to enable governance without stifling innovation: quick paths for low-risk agents and appropriate oversight for high-risk ones.

3. Enable Continuous Monitoring

Static governance policies aren’t enough when AI Agents operate continuously. You need real-time monitoring to track what agents are actually doing.

Key monitoring capabilities include:

- Activity dashboards: Track active agents, their usage patterns, and resource consumption. This helps you see at a glance which agents are delivering value and which are just burning resources.

- Behavioral analytics: Understand how agents make decisions and whether they’re operating within expected parameters. Are they accessing data appropriately? Are their outputs consistent with business rules?

- Anomaly detection: Flag unusual behaviors, like unexpected data access or API spikes. Early warnings allow for intervention before small issues escalate.

- Cost tracking: Monitor cloud computing, API usage, and platform fees, attributing them to specific agents to avoid surprise costs.

- Audit trails: Document every action, data access, decision, and context behind agent activities. This is essential for compliance, troubleshooting, and improvement.
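Anomaly detection does not have to start with sophisticated tooling. As a simple illustration, the sketch below flags an agent whose latest API call count sits far from its own history, using a z-score. The threshold of 3 standard deviations and the function name are assumptions, and real traffic often needs seasonality-aware methods.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Flag `latest` if it is more than `threshold` std devs from the mean."""
    if len(history) < 2:
        return False  # not enough data to judge
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu  # perfectly flat history: any change stands out
    return abs(latest - mu) / sigma > threshold


hourly_calls = [100, 110, 95, 105, 90]
print(is_anomalous(hourly_calls, 108))  # False: within normal variation
print(is_anomalous(hourly_calls, 400))  # True: a spike worth a look
```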

4. How to Audit Your Existing AI Agents

If you’re starting from a position of AI Agent sprawl, here’s your systematic audit process:

Phase One: Discovery

Start by identifying all AI Agents in your organization. Use multiple approaches, such as:

- Surveying departments about their AI tools

- Reviewing cloud platform accounts for AI deployments

- Analyzing API logs for agent activity

- Checking SaaS vendor dashboards for AI features in use

- Examining expense reports for AI-related subscriptions
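For the API log step, one simple approach is to count calls per caller and compare the callers against the agents you already know about. The log format below (a `client_id` field per record) is a hypothetical one; real gateways and platforms each use their own schema.

```python
from collections import Counter


def unregistered_callers(log_records, registered_ids):
    """Count API calls per caller and keep callers missing from the registry."""
    calls = Counter(record["client_id"] for record in log_records)
    return {cid: n for cid, n in calls.items() if cid not in registered_ids}


logs = [
    {"client_id": "crm-updater", "endpoint": "/customers"},
    {"client_id": "mystery-bot", "endpoint": "/customers"},
    {"client_id": "mystery-bot", "endpoint": "/invoices"},
]
unknown = unregistered_callers(logs, registered_ids={"crm-updater"})
print(unknown)  # {'mystery-bot': 2}
```

Callers that show up here are candidates for follow-up, not proof of a rogue agent: they may be ordinary integrations that were never documented.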

Phase Two: Classification

Once agents are identified, classify them by:

- Purpose, ownership, and risk level

- Overlapping or redundant capabilities

- Dependencies between agents and critical business systems

- Technical quality and maintenance status
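Overlap is easiest to spot by inverting the inventory: list, for each resource, which agents are allowed to modify it. The sketch below assumes you already have a mapping from agent to writable resources; the agent and resource names are made up and mirror the customer-record example earlier in the article.

```python
from collections import defaultdict


def find_write_overlaps(agent_writes):
    """Return resources that more than one agent is allowed to modify."""
    writers = defaultdict(list)
    for agent, resources in agent_writes.items():
        for resource in resources:
            writers[resource].append(agent)
    return {
        resource: sorted(agents)
        for resource, agents in writers.items()
        if len(agents) > 1
    }


overlaps = find_write_overlaps({
    "sales-agent": {"crm.customers"},
    "marketing-agent": {"crm.customers", "email.lists"},
    "support-agent": {"crm.customers", "tickets"},
})
print(overlaps)
```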

Phase Three: Risk Assessment

For each agent, assess:

- Security controls and compliance

- Business value delivered

- Owner accountability and understanding

- Potential impact if the agent fails

Phase Four: Remediation

Take action based on your risk assessment:

- Disable high-risk agents without proper controls

- Consolidate redundant agents

- Implement governance for critical agents

- Decommission underperforming agents

- Document and formally approve agents that meet governance standards
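The remediation choices above can be written down as an explicit decision rule so that every agent is handled consistently. The ordering of the checks and the input flags in this sketch are assumptions; your own risk assessment would supply the actual criteria.

```python
def remediation_action(risk, has_controls, is_redundant, delivers_value):
    """Pick one remediation step per agent (hypothetical precedence)."""
    if risk == "high" and not has_controls:
        return "disable"               # contain the risk first
    if not delivers_value:
        return "decommission"
    if is_redundant:
        return "consolidate"
    if not has_controls:
        return "implement-governance"
    return "document-and-approve"


print(remediation_action("high", has_controls=False,
                         is_redundant=False, delivers_value=True))
```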

The goal isn’t perfection on day one. It’s about establishing visibility, containing risks, and building sustainable AI Agent governance.

5. Consolidation Strategies That Work

Once you have a clear picture of your agent landscape, look for opportunities to simplify and consolidate:

- Merge overlapping capabilities: Consolidate agents with similar functions across departments. This reduces complexity, improves quality, and cuts costs.

- Standardize platforms: Instead of managing agents across multiple tools, select a few platforms that have built-in governance and security.

- Retire underperforming agents: If an agent hasn’t delivered value recently, decommission it to avoid unnecessary complexity.

- Build orchestration: Rather than duplicating agents, create coordinated multi-agent systems that share context and avoid conflicts.

Implementing these strategies will give you a solid foundation for AI Agent governance and help you manage AI sprawl effectively.

How to Effectively Govern AI Agents and Prevent Sprawl

Controlling AI Agent sprawl requires more than just technology. It needs organizational commitment and a cultural shift to establish lasting control.

1. Building Scalable Governance

Effective AI governance isn’t about creating bureaucracy. It’s about building frameworks that allow safe experimentation while preventing chaos.

Define clear autonomy levels: Not all AI Agents need the same level of oversight:

- Low autonomy agents: Analyze data and make recommendations but need human approval to act.

- Moderate autonomy agents: Take routine actions within defined parameters, requiring regular monitoring.

- High autonomy agents: Make complex decisions and execute workflows across systems, needing robust governance and continuous oversight.

Tailor your governance intensity to the agent’s autonomy and business impact.

Establish decision boundaries: Define what each agent can do:

- What problems can it solve?

- What data can it access?

- What actions can it take autonomously?

- When must it escalate to a human?

These boundaries prevent uncontrolled behavior while allowing agents to function autonomously.
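Decision boundaries become enforceable when they are declared as data and checked before each action. The following is a minimal sketch; the `Boundary` class, the `check` function, and the support-agent example are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Boundary:
    allowed_actions: frozenset
    accessible_data: frozenset
    escalate_actions: frozenset = frozenset()  # allowed, but need a human


def check(boundary, action, data):
    """Return 'allow', 'escalate', or 'deny' for a proposed agent action."""
    if action not in boundary.allowed_actions:
        return "deny"
    if data not in boundary.accessible_data:
        return "deny"
    if action in boundary.escalate_actions:
        return "escalate"
    return "allow"


support_agent = Boundary(
    allowed_actions=frozenset({"reply", "refund"}),
    accessible_data=frozenset({"tickets", "orders"}),
    escalate_actions=frozenset({"refund"}),
)
print(check(support_agent, "reply", "tickets"))   # allow
print(check(support_agent, "refund", "orders"))   # escalate
print(check(support_agent, "reply", "payroll"))   # deny
```

Denying by default keeps the agent autonomous inside its scope while making anything outside that scope an explicit, reviewable change to the boundary.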

Implement dynamic governance: Governance must evolve based on agent behavior:

- Review or decommission dormant agents.

- Reassess access permissions if usage changes.

- Implement additional oversight for high error rates or decreased business value.

2. Creating a Cultural Shift

AI governance requires organizational buy-in and a cultural change:

- Move fast with guardrails: Innovation doesn’t require chaos. Teams can experiment quickly within governed frameworks.

- Shared responsibility: AI governance isn’t just an IT function. Leaders deploying agents must understand they are accountable for both the benefits and risks.

- Demystify AI: Educate leadership and teams about the need for AI management. Autonomous agents are powerful tools but still need operational discipline and continuous improvement.

- Make AI governance a key part of onboarding for technical roles.

- Provide training on AI ethics and risk management for those deploying agents.

- Celebrate proactive teams that identify and address agent risks.

- Ensure governance metrics are visible across the organization to build awareness.

3. Preparing for the Future of Multi-Agent Systems

Today’s AI Agent sprawl is just the beginning. The future will involve multi-agent systems where agents collaborate to achieve complex goals.

What’s coming:

- Agents that communicate and collaborate with each other.

- Agents that delegate tasks to specialized agents.

- Workflows orchestrated across multiple agents with minimal human intervention.

Organizations that establish governance frameworks now will be ready for this future. Those that don’t will face exponentially worse sprawl as agent-to-agent complexity grows.

The window for establishing control isn’t infinite. Every day without AI governance adds more complexity and risk. Acting now is far easier than trying to fix the problem later.

How SculptSoft Helps Enterprises Control Autonomous AI Systems

At SculptSoft, we specialize in helping enterprises manage AI Agent sprawl by implementing tailored governance frameworks that ensure security, compliance, and operational efficiency. We design solutions that allow you to accelerate innovation while maintaining control over your autonomous systems. Whether you operate in healthcare, financial services, or another global industry, we create custom AI solutions aligned with industry regulations, such as HIPAA for healthcare and multi-jurisdictional compliance for global businesses.

We help organizations discover and assess their current AI Agent landscape, identifying risks, mapping dependencies, and evaluating business value. Our approach consolidates fragmented solutions into scalable, high-performing agents, reducing complexity and cost. SculptSoft integrates seamlessly with your existing infrastructure, whether on AWS, Azure, Google Cloud, or hybrid environments, ensuring no platform lock-in and full control over your AI architecture.

Our continuous monitoring and optimization services ensure that your agents remain efficient, compliant, and scalable as your needs evolve. With SculptSoft’s custom-built solutions, you gain a competitive edge: agents tailored to your specific workflows, without the constraints of off-the-shelf solutions. We partner with you, providing ongoing support, training, and consultation to ensure long-term success and the seamless integration of AI governance into your organization.

Conclusion

AI Agent sprawl is a growing challenge that enterprises must address before it leads to chaos. The organizations that succeed will be those that establish governance frameworks early and balance innovation with oversight.

Uncontrolled autonomous systems lead to security, compliance, and operational risks. In contrast, well-governed AI Agents unlock opportunities for efficiency, better decision-making, and sustainable growth.

The time to act is now, before sprawl turns into a crisis. Start by gaining visibility and implementing AI governance policies aligned with your business needs. SculptSoft can help you establish the right framework to keep your AI systems under control while driving innovation.

Contact SculptSoft today to secure a strategic advantage with effective AI governance.

Frequently Asked Questions

What is AI Agent sprawl and why is it a problem for enterprises?

AI Agent sprawl occurs when autonomous AI systems spread across an organization without centralized governance or oversight. This can lead to inefficiency, security vulnerabilities, compliance risks, and operational chaos, making it difficult for enterprises to manage and control these systems effectively.

How does AI Agent sprawl happen in organizations?

AI Agent sprawl happens because AI Agents are easy to deploy, and many teams use low-code or no-code platforms to build and launch them without involving IT or compliance teams. As different departments independently create AI Agents, governance becomes fragmented, leading to sprawl and increasing risks.

What are the risks of AI Agent sprawl in enterprises?

AI Agent sprawl can create several risks, including security vulnerabilities, compliance violations, financial inefficiencies, and operational disruptions. Uncoordinated AI Agents may cause conflicting actions, inaccurate data processing, and unpredictable system behavior, all of which undermine organizational efficiency and trust in AI.

Why are traditional IT controls insufficient for managing AI Agents?

Traditional IT controls are designed for passive systems that require human intervention. AI Agents, however, operate autonomously, making decisions based on context. These systems need dynamic governance frameworks that account for their decision-making logic, access permissions, and real-time actions, which traditional IT controls do not address.

How can enterprises take control of AI Agent sprawl?

To take control, enterprises must implement a centralized AI Agent registry, set clear governance policies, and establish continuous monitoring systems. It’s essential to classify and audit AI Agents regularly, consolidate overlapping functionalities, and create standardized integration processes to reduce complexity and risks.

What tools and processes can help with AI Agent governance?

AI governance requires specialized tools such as activity dashboards, behavioral analytics, and anomaly detection systems. Additionally, organizations must develop practical policies for pre-deployment approval, access control, rate limiting, and lifecycle management to ensure that AI Agents remain compliant, efficient, and secure.